How Local VLMs Are Reshaping AI-Powered Machine Vision on Industrial PCs

Article Key Points:

- Traditional machine vision still handles core inspection tasks best.

- Local VLMs add contextual interpretation to machine vision workflows.

- Edge deployment on industrial PCs makes local VLM use more practical.

- Hybrid workflows can combine deterministic vision with flexible AI interpretation.

- Industrial PCs help connect vision, automation, storage, and operator workflows.

Industrial machine vision is entering a new phase as local multimodal models make interpretation at the edge more practical. For years, most production systems have relied on traditional machine vision for narrow, repeatable tasks such as defect detection, visual inspection, optical character recognition (OCR) and optical character verification (OCV), barcode reading, traceability, and pass/fail classification. That approach remains the best fit for many high-speed industrial applications.

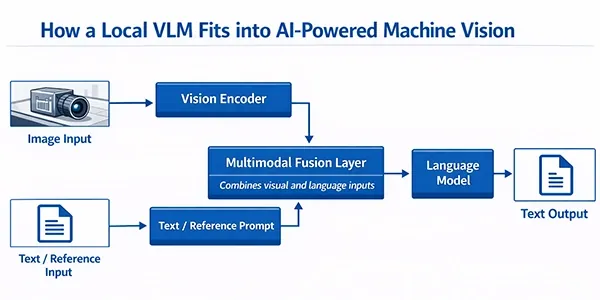

What is changing is not the need for traditional machine vision, but the workflow around it. Local vision-language models, or local VLMs, add a second layer of interpretation that can run directly on industrial PCs. If LLMs are language models that work with text, VLMs extend that idea by combining visual input with language-based understanding. Instead of replacing traditional machine vision, they help explain anomalies, interpret OCR results in context, support operator review, and make visual output more useful inside real production workflows.

Traditional Machine Vision Still Owns Many Core Tasks

Traditional machine vision remains the right choice for many narrow, high-speed tasks such as presence checks, known defect detection, OCR or OCV on fixed formats, barcode reading, and pass/fail inspection. These workflows are usually easier to validate, optimize, and integrate when the target output is structured and repeatable.

That foundation is not going away.

What Local VLMs Add

A local VLM brings language-based interpretation to visual systems. In practice, that means a machine vision workflow can move beyond detection or classification and begin describing what looks abnormal, explaining why a result may matter, interpreting OCR output in context, or comparing a scene against a work instruction or expected process state.

That matters because many industrial workflows do not end with a model output. They also require explanation, review, traceability, or operator action. In many cases, the biggest immediate gain is not replacing inspection, but making the result easier for operators and technicians to understand and act on. Local VLMs extend the workflow rather than replace the underlying vision model.

Why Local Deployment Matters

In industrial environments, cloud-only AI is often the wrong fit. Machine builders and factory operators care about latency, connectivity, data control, reliability, and deployment simplicity. Running a multimodal model locally on an edge AI industrial PC makes this new layer more practical.

Local deployment can reduce latency for operator-facing tasks, improve control over sensitive production images, simplify deployment inside machines or line-side stations, and create more predictable behavior for industrial workflows.
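One way to make that predictability concrete is to give the interpretation step a fixed time budget, so a slow model call never holds up the station. The following is a minimal sketch under stated assumptions: `explain_with_budget`, `budget_s`, and the fallback message are hypothetical names chosen for illustration, and `explain` stands in for whatever local VLM call a given deployment uses.

```python
import concurrent.futures
import time
from typing import Callable


def explain_with_budget(
    explain: Callable[[], str],
    budget_s: float,
    fallback: str = "Explanation unavailable; see raw inspection result.",
) -> str:
    """Run `explain` in a worker thread and give up after `budget_s` seconds.

    `explain` is a placeholder for a local VLM call (an assumption, not a
    specific library API). Exceptions raised by `explain` propagate to the caller.
    """
    executor = concurrent.futures.ThreadPoolExecutor(max_workers=1)
    future = executor.submit(explain)
    try:
        return future.result(timeout=budget_s)
    except concurrent.futures.TimeoutError:
        return fallback
    finally:
        # Do not block on the worker: a timed-out call keeps running in the
        # background until it finishes on its own.
        executor.shutdown(wait=False)


if __name__ == "__main__":
    def slow_model() -> str:
        time.sleep(0.5)  # stands in for a slow local inference call
        return "Label appears skewed."

    print(explain_with_budget(slow_model, budget_s=0.05))
    print(explain_with_budget(lambda: "Label appears skewed.", budget_s=1.0))
```

The pass/fail decision is not involved here at all; only the optional explanation is allowed to time out.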

A recent example is Google’s Gemma 4 family. Intel’s April 2026 announcement highlights Gemma 4 as a multimodal model family with text and image input, along with optimization paths across Intel hardware and software frameworks including OpenVINO. That does not mean every industrial deployment will use Gemma 4 specifically, but it does show how quickly local VLM workflows are becoming more practical on real edge platforms.

The Likely Winning Architecture: Hybrid

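For many industrial applications, the best design is neither all-traditional vision nor all-VLM. It is a hybrid workflow in which traditional vision runs first and a local VLM handles only the cases that need interpretation.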

This keeps the speed and determinism of traditional vision in the first stage, while adding a more flexible second stage only where interpretation adds value. For OEM teams, that can mean keeping a proven inspection pipeline in place while adding a new layer of flexibility without redesigning the entire machine vision architecture. The next section looks more closely at where that second stage helps most.
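The two-stage idea can be sketched in a few lines. This is an illustration under stated assumptions, not a reference design: `InspectionResult`, `Outcome`, `run_hybrid`, and `review_threshold` are hypothetical names, and `inspect` and `explain` are placeholders for an existing vision pipeline and a local VLM call.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class InspectionResult:
    """Output of the deterministic first stage (hypothetical structure)."""
    passed: bool
    confidence: float
    defect_code: Optional[str] = None


@dataclass
class Outcome:
    decision: str                      # "pass" or "fail", always from stage one
    explanation: Optional[str] = None  # filled in only when stage two runs


def run_hybrid(
    image: bytes,
    inspect: Callable[[bytes], InspectionResult],
    explain: Callable[[bytes, InspectionResult], str],
    review_threshold: float = 0.9,
) -> Outcome:
    """Run deterministic inspection first; call the VLM only when needed."""
    result = inspect(image)
    decision = "pass" if result.passed else "fail"

    # Escalate failures and low-confidence passes; everything else stays
    # on the fast path and never touches the model.
    needs_context = (not result.passed) or result.confidence < review_threshold
    if not needs_context:
        return Outcome(decision)
    return Outcome(decision, explain(image, result))
```

In this sketch the VLM never overrides the pass/fail decision. It only attaches an explanation, which keeps the validated inspection logic unchanged.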

Where Local VLMs Add the Most Value

The clearest way to think about local VLMs is in three steps.

Step 1: Keep the core inspection deterministic.

Use traditional vision for the narrow task it already does well, such as detecting a defect, reading a code, checking a label position, or making a pass/fail decision.

Step 2: Add a local VLM where interpretation begins.

This is where the workflow moves beyond a raw model output and starts needing context, explanation, comparison, or operator-facing guidance.

Step 3: Use the industrial PC as the edge platform that brings both layers together.

The industrial automation PC connects cameras, I/O, storage, automation interfaces, and the software stack needed to turn visual output into a usable production workflow.

That is why the strongest fit for local VLMs is usually not the narrow inspection itself. It is the layer around that inspection.

| Where local VLMs help most | What they add beyond traditional vision |
|---|---|
| OCR, OCV, and label verification with context | Goes beyond text extraction to judge whether content looks complete, correctly placed, or consistent with the expected format. |
| Exception handling and anomaly explanation | Helps describe what looks unusual before an image is escalated to an operator. |
| Work-instruction and process verification | Compares a current image against an expected assembly, packaging, or process state in a more flexible way. |
| Operator assistance and review | Turns raw visual output into clearer, human-readable feedback that is easier to act on. |
| Variation-heavy environments | Reduces reliance on rewriting rules or reprogramming for every small product or packaging change. |

A good example is label inspection on a packaging line. A traditional vision system may already detect whether a label is present, read a barcode, and extract text with OCR. A local VLM fits better in the next step: helping determine whether the overall label appears incomplete, whether a warning block is missing, whether the printed layout looks inconsistent, or whether the package differs from the expected reference format.
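As a rough sketch of that next step, the first-stage OCR text and a list of expected label blocks can be folded into a prompt, and the model's reply can be parsed defensively so that anything unexpected is routed to a human. The prompt wording, the JSON reply format, and the names `build_label_prompt` and `parse_label_reply` are all assumptions made for illustration. The actual model call is left out because it depends on the runtime in use.

```python
import json
from typing import Dict, List

ALLOWED_VERDICTS = {"ok", "suspect"}


def build_label_prompt(ocr_text: str, expected_blocks: List[str]) -> str:
    """Combine first-stage OCR output with the expected label layout."""
    blocks = "\n".join(f"- {b}" for b in expected_blocks)
    return (
        "You are reviewing a product label image.\n"
        f"OCR text from the first stage:\n{ocr_text}\n"
        f"Blocks the reference label should contain:\n{blocks}\n"
        'Reply with JSON only: {"verdict": "ok" or "suspect", "issues": [strings]}'
    )


def parse_label_reply(reply: str) -> Dict[str, object]:
    """Parse the model reply; anything malformed becomes 'needs_review'."""
    try:
        data = json.loads(reply)
    except json.JSONDecodeError:
        return {"verdict": "needs_review", "issues": ["unparseable model reply"]}
    if not isinstance(data, dict):
        return {"verdict": "needs_review", "issues": ["unexpected reply shape"]}

    verdict = data.get("verdict")
    if verdict not in ALLOWED_VERDICTS:
        verdict = "needs_review"

    issues = data.get("issues", [])
    if not isinstance(issues, list):
        issues = [issues]
    return {"verdict": verdict, "issues": [str(i) for i in issues]}
```

Treating every malformed reply as `needs_review` keeps the free-form model output from silently turning into a pass.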

That kind of second-layer judgment is where local VLMs become useful. It helps teams handle variation, support operator review, and reduce the need to rebuild inspection logic for every small packaging change. It is especially relevant for system integration teams supporting multiple product variants, packaging formats, or customer-specific inspection rules.

It also makes the operator’s job easier. Instead of sending only a fail flag or forcing engineers to create new rule sets for every minor variation, the system can return a clearer explanation of what looks wrong and why it may matter.

Why Industrial PCs Matter

This shift is not only about models. It is about the platform that makes hybrid workflows practical in production.

| Industrial PC capability | Why it matters in machine vision workflows |
|---|---|
| Camera connectivity and LAN or PoE | Supports image acquisition and distributed vision architectures. |
| Digital I/O and light control | Helps synchronize triggers, sensors, and inspection events. |
| Serial and industrial interfaces | Connects vision systems to legacy devices and automation equipment. |
| Local storage and logging | Supports traceability, buffering, and event review. |
| PLC and software integration | Connects inspection results to control and production workflows. |
| Rugged 24/7 operation | Makes deployment practical in real industrial environments. |

As inspection workflows become more layered, the industrial PC becomes more important, not less. It is where detection, interpretation, automation, and operator interaction can converge.
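To illustrate the local storage and logging role, the sketch below appends one JSON record per inspection event to a file on the industrial PC, keeping the deterministic decision and any VLM explanation side by side for later review. The field names, the one-record-per-line layout, and the functions `log_event` and `read_events` are assumptions for illustration, not a prescribed format.

```python
import json
from datetime import datetime, timezone
from pathlib import Path
from typing import List, Optional, Union


def log_event(
    log_path: Union[str, Path],
    station: str,
    part_id: str,
    decision: str,
    explanation: Optional[str] = None,
) -> dict:
    """Append one inspection event as a single JSON line and return it."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "station": station,
        "part_id": part_id,
        "decision": decision,        # from the deterministic stage
        "explanation": explanation,  # from the VLM stage, if it ran
    }
    with Path(log_path).open("a", encoding="utf-8") as f:
        f.write(json.dumps(record) + "\n")
    return record


def read_events(log_path: Union[str, Path]) -> List[dict]:
    """Load all logged events, skipping blank lines."""
    with Path(log_path).open("r", encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]
```

An append-only file like this is easy to buffer locally and forward later, which suits stations with intermittent connectivity.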

What This Means for Machine Vision and Automation Teams

The question is no longer only which model gives the best detection or inspection accuracy. It is also where interpretation belongs in the workflow, what should remain deterministic, what can run locally at the edge, and how visual results should be presented to operators or production systems.

That is why local VLMs matter. They do not just change inference. They change system design.

Conclusion

Traditional machine vision remains the best choice for many narrow, high-speed inspection tasks. That is the production reality.

But local VLMs are beginning to reshape machine vision by adding a new layer of contextual understanding on edge industrial PCs. The most effective systems will likely combine both worlds: deterministic vision where speed and repeatability matter most, and local multimodal intelligence where interpretation adds value.

That combination may define the next machine vision workflow at the edge.

Looking at how local VLMs may fit into your next machine vision, visual inspection, or quality automation project? NODKA industrial PCs and automation PCs provide the edge computing foundation for inspection, OCR and OCV, traceability, and AI-enabled industrial workflows.